How AI Collaboration, Not Control, Unlocks Better AI Outcomes

Written by Pax Koi, creator of Plainkoi — tools and essays for clear thinking in the age of AI.

AI Disclosure: This article was co-developed with the assistance of ChatGPT (OpenAI) and finalized by Plainkoi.

TL;DR

Trying to control AI often leads to frustration. But when you shift into collaboration—clear tone, structure, and intent—you unlock better results and sharper thinking. AI reflects you. Speak like a partner, not a commander.

The Vending Machine Mindset

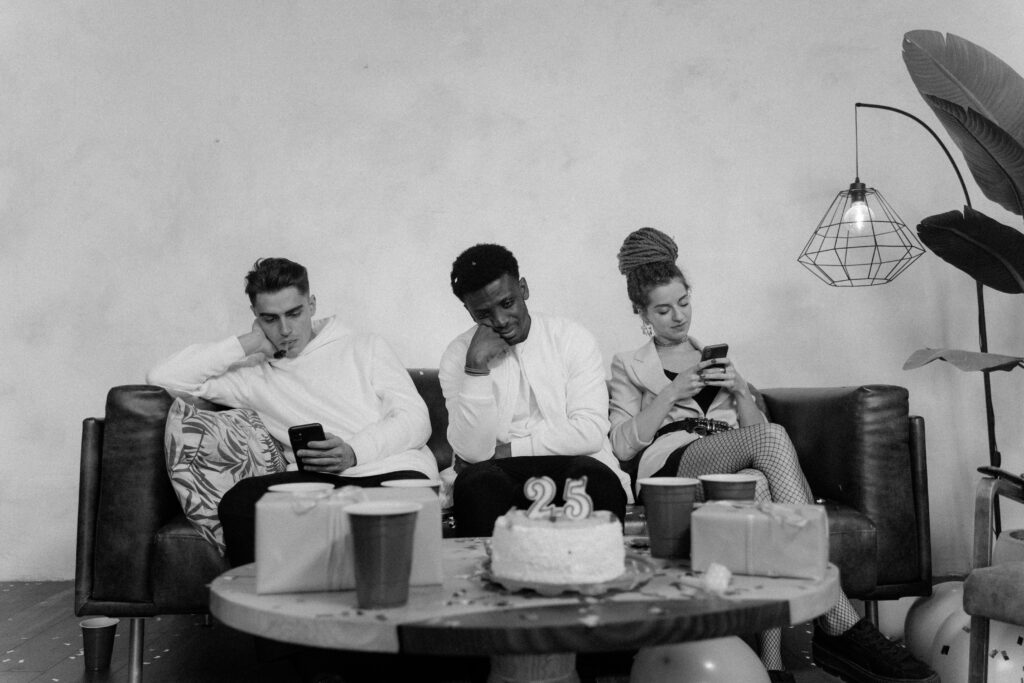

Using AI still feels like a gamble for many people. You type in a prompt like you’re feeding a vending machine and cross your fingers. Maybe you’ll get brilliance. Maybe you’ll get nonsense. Usually, it’s something in between.

And when it misses?

“Why is it hallucinating again?”

“Why can’t it just follow directions?”

But here’s the twist: what if it’s not the machine that’s broken?

What if it’s the way we’re using it?

Maybe the problem isn’t the tool—it’s the frame.

We’re treating a creative partner like a disobedient appliance. And the more we try to “control” it, the less we actually get from it.

It’s time to stop commanding and start collaborating.

The AI Isn’t Stubborn—You’re Just Being Vague

Let’s get one thing straight: AI isn’t being difficult. It’s being literal. Painfully, robotically literal.

Tools like ChatGPT, Claude, or Gemini don’t reliably read between the lines. They can’t see the tone or intent in your head unless you put it on the page. When a prompt leaves gaps, they fill them with a plausible guess, and that guess may not be what you meant.

So when you type something like:

“Write something short but also explain everything and make it light but professional and kind of emotional.”

You’ve basically handed the AI a knot of contradictions and asked it to make origami.

What comes out isn’t bad. It’s exactly what you asked for—just without the clarity to make it good.

If you say “Make it quick,” the AI might give you three sentences when you meant 300 words. It needs you to spell it out.

The issue isn’t its logic.

The issue is your language.

Stop Hacking. Start Communicating.

AI advice is full of “prompt hacks”:

- “Ask it to roleplay as a 19th-century novelist turned data scientist!”

- “Use this secret formula!”

Fun? Sure. Useful? Occasionally.

But if you really want consistent, high-quality results, the fix isn’t tricks. It’s clarity.

Prompting well isn’t about outsmarting the model. It’s about communicating clearly with something that only understands exactly what you say.

It won’t rescue you from your own contradictions. It won’t magically resolve your vagueness.

It reflects your thinking—flaws and all.

Prompting isn’t spellcasting. It’s a mirror.

Show, Don’t Just Say

Let’s break this down with two examples:

Writing example:

Bad Prompt:

“Write something smart about leadership but kind of funny. Not too long, but make it deep.”

Sounds natural, right? Like something you’d say to a friend. But to an AI, it’s a mess:

- “Smart”—how? Academic? Insightful? Witty?

- “Funny”—stand-up funny? Dad-joke funny?

- “Deep”—philosophical? Personal?

Better Prompt:

“Write a 3-paragraph article on leadership that blends wit and wisdom—like something a clever mentor might say. Keep the tone conversational with a light touch of humor.”

Same idea, only a few words longer. But now the model has a map to follow: tone, length, style, and mood are all spelled out.
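One way to make that map explicit is to write each decision down as a named field before composing the prompt. The sketch below is only an illustration: the `PromptSpec` class and its field names are hypothetical, not part of any AI tool's API. It simply assembles text you could paste into any chat model.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the choices a clear prompt spells out."""
    topic: str
    length: str
    style: str
    tone: str

    def render(self) -> str:
        # Join the explicit choices into one plain-language request.
        return (
            f"Write {self.length} on {self.topic} {self.style}. "
            f"Keep the tone {self.tone}."
        )


spec = PromptSpec(
    topic="leadership",
    length="a 3-paragraph article",
    style="that blends wit and wisdom, like something a clever mentor might say",
    tone="conversational with a light touch of humor",
)
print(spec.render())
```

If a field is hard to fill in, that is usually the part of the request you have not decided yet.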

Lifestyle example:

Bad Prompt:

“Plan a fun weekend.”

Better Prompt:

“Plan a relaxing weekend for two, including one outdoor activity and a budget-friendly dinner, in a cheerful tone.”

This isn’t about being robotic.

It’s about being readable.

Control vs. Collaboration

When you change your mindset, your whole interaction changes:

| Mindset | Question | Example |
|---|---|---|
| Against AI | “Why won’t it do what I want?” | “Write something cool.” |
| Against AI | “How do I trick it?” | “Act like a genius and give me something amazing.” |
| Against AI | “It failed.” | “This is useless.” |
| With AI | “Did I clearly say what I want?” | “Write a 200-word blog post with a friendly tone.” |
| With AI | “How can I guide it better?” | “Give three bullet points with playful examples.” |
| With AI | “What part of my prompt was fuzzy?” | “Was I specific about tone or audience?” |

This shift is the unlock.

You stop fighting with the AI.

You start co-creating.

Because AI doesn’t resist you—it reflects you.

Prompting Makes You Smarter (Really)

Here’s the underrated part: good prompting doesn’t just get you better outputs.

It sharpens your mind.

To prompt clearly, you have to think clearly:

- What am I actually trying to say?

- Who is this for?

- How should it feel to read?

You start noticing your own vagueness. You catch where you’re hedging or asking for too much at once. Prompting becomes less of a task—and more of a mental practice.

The better you prompt, the better you think.

Collaboration Is a Skill, Not a Shortcut

Co-creating with AI isn’t lazy. It’s not outsourcing. It’s a dialogue.

Imagine the AI as a turbo-charged intern: super fast, wildly creative, but incredibly literal. If your instructions are off, so is the result.

To collaborate well, you have to show up with intention:

- Be clear about your goals

- Give examples or formats

- Set tone and structure

- Review what it gives you—then refine

You won’t nail it on the first try. That’s okay. It’s a process. You explore, revise, and build—just like with any creative teammate.
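Part of the review step can be made mechanical. As a rough sketch, the snippet below scans a draft prompt for words that tend to hide an undecided choice. The word list and the `find_vague_terms` helper are assumptions made for illustration, drawn from the examples in this article; they are not a standard or a complete list.

```python
import re

# Hypothetical list of words that often signal an unspecified choice.
VAGUE_TERMS = {"smart", "funny", "deep", "short", "quick", "cool", "fun", "amazing"}


def find_vague_terms(prompt: str) -> list[str]:
    """Return the vague terms found in the prompt, in order of first appearance."""
    found = []
    for word in re.findall(r"[a-z]+", prompt.lower()):
        if word in VAGUE_TERMS and word not in found:
            found.append(word)
    return found


draft = "Write something smart about leadership but kind of funny. Not too long, but make it deep."
print(find_vague_terms(draft))  # ['smart', 'funny', 'deep']
```

An empty result does not mean the prompt is clear. It only means none of the listed words appear, so treat it as a nudge to reread your draft, not a verdict.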

Prompting Is the New Literacy

This isn’t just a niche skill for techies or writers.

Prompting is becoming a new kind of literacy.

Students are using it to study. Therapists to generate exercises. Marketers to brainstorm. Everyday people to plan meals, write resumes, or journal more clearly.

The real skill isn’t “prompt engineering.”

It’s clear, flexible thinking made visible through language.

AI just happens to give us instant feedback. And in that mirror, we start to see how we communicate—and where we can grow.

But What About AI’s Flaws?

Let’s not pretend AI is flawless.

It hallucinates. It forgets. It gives generic or repetitive responses. It can sound wooden when your prompt is fuzzy.

But here’s the mirror again: so do we.

When we’re rushed, tired, or vague—we miscommunicate too. The AI just makes those gaps visible.

If the AI’s response feels off, don’t stress—it’s part of learning. Try tweaking one thing, like adding a tone or example, and see how it shifts.

Blame the model less. Get curious more.

That’s where the learning happens.

A Tiny Experiment (Try This Now)

If you want to feel the power of prompting, try this:

- Ask your favorite AI: “Describe your favorite animal like it’s a Pixar character.”

- Then follow up with: “Now describe it like it’s in a David Attenborough documentary.”

Same concept. Completely different execution.

That’s tone. That’s context. That’s collaboration.

And it’s kind of fun.

Start here: This takes 2 minutes and shows you how your words shape the AI’s response.
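The same experiment can be written as a small template, which makes it obvious that only one variable changes between the two prompts. This is a sketch with a hypothetical `styled_prompt` helper. It only builds the prompt text and does not call any AI service.

```python
def styled_prompt(subject: str, style: str) -> str:
    """Build a prompt where only the requested style varies (illustrative helper)."""
    return f"Describe {subject} like it's {style}."


styles = ["a Pixar character", "in a David Attenborough documentary"]
for style in styles:
    # Same subject every time; only the style changes.
    print(styled_prompt("your favorite animal", style))
```

Swap in your own styles and compare the responses side by side to see how much a single phrase steers the result.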

Final Thought: Aim for Clarity, Not Control

This isn’t just about AI.

It’s about how we communicate.

When you stop trying to control the outcome and start focusing on expressing yourself clearly, something shifts.

The AI becomes less of a vending machine—and more of a teammate.

Yes, you’ll still get weird outputs sometimes. Yes, you’ll still need to revise. But over time, you’ll get better. Not just at prompting—but at thinking, writing, creating, and reflecting.

So next time the AI gives you a flat or fuzzy response, don’t reach for a cheat code.

Reach for a better prompt.

Rephrase. Refocus. Rethink.

Because the goal isn’t to master the machine.

The goal is to communicate so clearly that collaboration becomes effortless.

And you’re already halfway there.

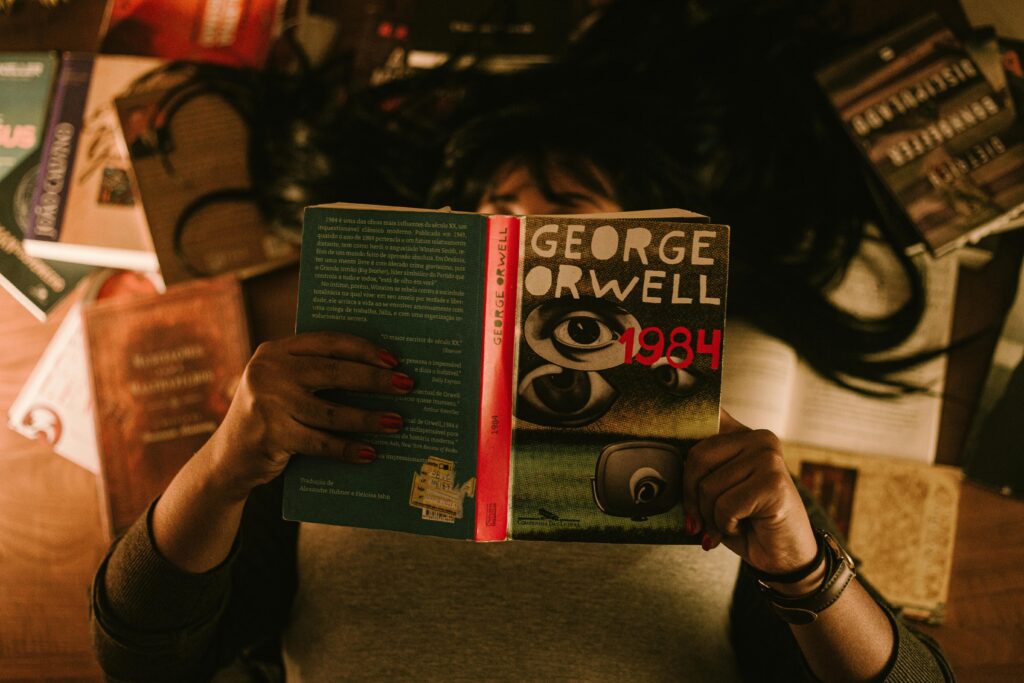

Suggested Reading

Radical Collaboration

James W. Tamm & Ronald J. Luyet, 2004

This book isn’t about AI—it’s about human communication. But its lessons on trust, openness, and shared purpose translate beautifully to prompting. Collaboration thrives when clarity replaces control.

Citation:

Tamm, J. W., & Luyet, R. J. (2004). Radical Collaboration: Five Essential Skills to Overcome Defensiveness and Build Successful Relationships. Harper Business. https://www.harpercollins.com/products/radical-collaboration-james-w-tammronald-j-luyet?variant=32114931531810



AI Disclosure: This article was co-developed with the assistance of ChatGPT (OpenAI) and Gemini (Google DeepMind), and finalized by Plainkoi.

© 2025 Plainkoi at CoherePath. Words by Pax Koi.

https://CoherePath.org